Decentralized Computation Offloading scheme (DECOFFEE) for Energy Efficiency across ICOS Layers

The vast number of heterogeneous devices connected over the internet, known as the Internet of Things (IoT), has created a new landscape in the era of broadband networks, with several challenges to address. These include connectivity issues, threat prediction and mitigation, and dynamic load balancing during task execution to reduce latency and support green computing. For example, not all IoT devices have the processing capacity to execute advanced security protocols or computationally intensive applications.

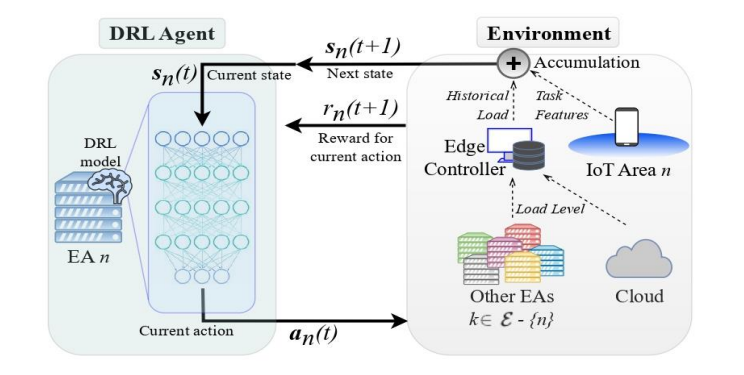

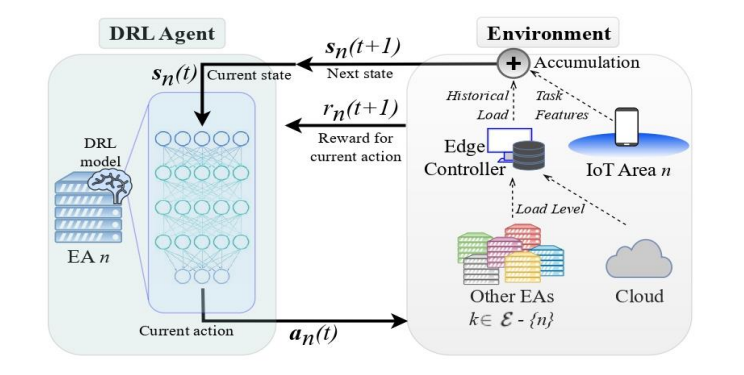

Within ICOS, the Decentralized Computation Offloading scheme (DECOFFEE) has been developed. It is a framework that allows agents running on IoT devices to decide dynamically between local processing, horizontal offloading (to other edge agents), and vertical offloading (to the cloud). It ensures fair CPU sharing for offloaded tasks and balances workload distribution across the continuum to maximize system performance and task completion rates. Functionally, DECOFFEE instantiates a deep reinforcement learning (DRL) model at each ICOS node to make decisions on incoming computational tasks. The resulting agent receives a positive reward when task offloading improves energy consumption and CPU metrics, and a negative reward otherwise.
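The reward signal described above can be illustrated with a minimal sketch. The source does not give the actual DECOFFEE reward formula, so the names `Action`, `Metrics`, and `reward`, the equal weighting, and the units are all assumptions made for illustration only.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """The three options an agent chooses between (labels are illustrative)."""
    LOCAL = "local"            # process the task on the device itself
    HORIZONTAL = "horizontal"  # offload to another edge agent
    VERTICAL = "vertical"      # offload to the cloud


@dataclass
class Metrics:
    energy_joules: float    # energy spent on the task (assumed unit)
    cpu_utilization: float  # fraction of CPU in use, in [0, 1]


def reward(baseline: Metrics, observed: Metrics,
           energy_weight: float = 0.5, cpu_weight: float = 0.5) -> float:
    """Hypothetical reward: positive if energy and CPU metrics improved
    relative to the baseline, negative if they got worse."""
    # Relative energy saving; the floor avoids division by zero.
    energy_gain = (baseline.energy_joules - observed.energy_joules) / max(
        baseline.energy_joules, 1e-9
    )
    # Absolute drop in CPU utilization.
    cpu_gain = baseline.cpu_utilization - observed.cpu_utilization
    return energy_weight * energy_gain + cpu_weight * cpu_gain
```

In this sketch, a decision that halves energy use and lowers CPU utilization yields a positive value, while the opposite outcome yields a negative one. A real deployment would choose the weights and the baseline according to its own energy and performance targets.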

The DRL functional architecture is shown in Figure 1. Each edge node agent receives as input the accumulated features of the tasks it has received locally, together with the load of all other available ICOS (edge/cloud) nodes, obtained via the ICOS Telemetry Controller. By running inference on the DRL model, each agent then decides where to offload the task.
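The inference step can be sketched as follows, under stated assumptions: the observation is taken to be a flat concatenation of task features and node loads, and the trained DRL model is replaced by any callable that returns one score per candidate target. The function names, the feature layout, and the optional epsilon-greedy exploration are hypothetical and not taken from the DECOFFEE implementation.

```python
import random
from typing import Callable, List, Sequence


def build_observation(task_features: Sequence[float],
                      node_loads: Sequence[float]) -> List[float]:
    """Concatenate local task features with the telemetry-reported loads."""
    return [*task_features, *node_loads]


def choose_target(observation: Sequence[float],
                  q_function: Callable[[Sequence[float]], Sequence[float]],
                  targets: Sequence[str],
                  epsilon: float = 0.0) -> str:
    """Pick the target with the highest score; explore with probability epsilon."""
    if random.random() < epsilon:
        return random.choice(list(targets))
    q_values = q_function(observation)
    if len(q_values) != len(targets):
        raise ValueError("q_function must return one value per target")
    best = max(range(len(targets)), key=lambda i: q_values[i])
    return targets[best]


# Stand-in for a trained model: prefers the least-loaded node.
# The last three observation entries are the node loads in this example.
def least_loaded_q(observation: Sequence[float]) -> List[float]:
    return [-load for load in observation[-3:]]


targets = ["local", "edge-2", "cloud"]           # hypothetical node names
obs = build_observation(task_features=[2.0, 0.5],  # e.g. size, deadline
                        node_loads=[0.9, 0.3, 0.6])
decision = choose_target(obs, least_loaded_q, targets)
```

With `epsilon` left at zero the choice is purely greedy. A trained network would replace `least_loaded_q` and would typically learn a richer mapping than "pick the least-loaded node".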

The DECOFFEE model can be readily applied in Federated Learning (FL) scenarios. In this setting, multiple participating nodes each train an ML model locally, as in Figure 1, on data collected from their surrounding environment. The model parameters, such as the weights in the case of neural network (NN) training, are then sent to a master ML node for aggregation and update. After processing and aggregating all parameters, the master node sends the updated weights back to the participating nodes. As a result, the computational burden is divided among the participating nodes and no sensitive data is transmitted. Privacy-sensitive applications, such as e-health, can therefore be deployed more easily.
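The aggregation step at the master node can be sketched as below. The source does not state which aggregation rule is used, so this example assumes sample-weighted averaging in the style of federated averaging (FedAvg). It treats each model as a flat list of weights, and the function name and arguments are illustrative.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Average client parameters, weighting each client by its sample count."""
    if len(client_weights) != len(client_sizes) or not client_weights:
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total number of samples must be positive")

    n_params = len(client_weights[0])
    aggregated = [0.0] * n_params
    for weights, size in zip(client_weights, client_sizes):
        if len(weights) != n_params:
            raise ValueError("all clients must send the same number of parameters")
        for i, w in enumerate(weights):
            aggregated[i] += w * size / total
    return aggregated


# Two hypothetical nodes; the second trained on three times as much data.
global_weights = federated_average(
    client_weights=[[1.0, 2.0], [3.0, 4.0]],
    client_sizes=[1, 3],
)
```

Only the parameter vectors and sample counts reach the master node in this sketch; the raw training data stays on each participant, which is the privacy property the paragraph above relies on.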

[1] Giannopoulos, A., Paralikas, I., Spantideas, S., & Trakadas, P. (2024). HOODIE: Hybrid Computation Offloading via Distributed Deep Reinforcement Learning in Delay-Aware Cloud-Edge Continuum. IEEE Open Journal of the Communications Society.

[2] Giannopoulos, A., Paralikas, I., Spantideas, S., & Trakadas, P. (2024, September). COOLER: Cooperative Computation Offloading in Edge-Cloud Continuum Under Latency Constraints via Multi-Agent Deep Reinforcement Learning. In 2024 International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS) (pp. 9-16). IEEE.

[3] Giannopoulos, A., Suárez-Cetrulo, A., Masip-Bruin, X., D'Andria, F., & Trakadas, P. (2024). Placing Computational Tasks within Edge-Cloud Continuum: a DRL Delay Minimization Scheme. In 30th International European Conference on Parallel and Distributed Computing (Euro-Par 2024), Next steps in IoT-Edge-Cloud Continuum Evolution: Research and Practice (IECCONT).

This project has received funding from the European Union’s HORIZON research and innovation programme under grant agreement No 101070177.